In part one, we looked at the nature of computers, quantum computing, and the definition of what it means for something to be computable. This is all well and good for someone writing a present-day or equivalent setting, but what about alien computers? How might they differ theoretically? What can be used to make them? Onward!

Reality. Current computers are made mostly of silicon and various metals. They function chiefly by manipulating electric current: not the presence of electrons, but their flow. A buildup of electrons is called a static charge, and is generally undesirable; in a typical electric circuit, electrons have roughly the same abundance at any given point, and we only care about whether or not they're moving. Most computers at some point convert this flow into either a physical state (flash memory works by trapping electrons) or a magnetic state (as in hard drives, floppy disks, and tapes).

It's increasingly common to see electric current converted into pulses of light as well (as in fibre-optic cabling), and it's highly probable that most electronic components in a computer could someday be replaced with optical circuitry. And as we discussed in the last article, a great many intricate computations could probably be done very well by quantum computers. Contrary to many science fiction assumptions, however, none of this is very interesting to look at: it can all be etched into a standard printed circuit board, barely distinguishable from a normal computer.

Before integrated circuits (microchips, the little black rectangles) became widespread, computers had to be a lot bulkier. This is why the mainframes of the 60s and 70s took up whole rooms for relatively little processing power: the functionality that fits into a one-square-inch die today required dozens of circuit board cards to implement, typically taking up the same space as (but weighing much more than!) a refrigerator. In this era, random-access memory was magnetic (ferrite core based), and hard drives were the size of washing machines or larger and offered storage measured in megabytes. This level of computing is both necessary and sufficient for a trip to the moon or another planet, and so is of great importance to science fiction. Anything more primitive is likely to be destroyed during takeoff or to take far too long to provide the necessary course corrections. Indeed, the Apollo missions carried computers of this generation, built from some of the earliest integrated circuits and magnetic core memory.

Before this came the famous vacuum tube computers of the forties, whose many lightbulb-like tubes acted as the switches in their logic gates. These machines were notoriously unreliable, hot, expensive, and huge: different sources estimate that a machine of several thousand tubes could burn out a tube anywhere from three times a day to once every three days, requiring the machine to be shut down while the trouble was located and remedied. Such machines are not practical for much more than basic programs, extrapolations, regressions, and tabulations, but they are a great boon to a society only familiar with mechanical computation, and they require so many resources to build that they are certain to grab the public imagination.

The first technology that we on Earth considered a computing machine was purely mechanical. These machines were typically crank-powered: numbers and operations were input with a series of dials or levers, gears and shafts performed basic operations like addition and multiplication, and results were returned on dials or punched cards. The most advanced designs, such as Babbage's famous Analytical Engine, could accept meaningful programs to perform arbitrary calculations, and it is the design of such things that was at the forefront of the minds of the first theorists working with Turing machines.

Generalization. So what makes this all possible? Can a computer be made of sand or water? To build a general-purpose computer from the most basic parts, a medium needs to be able to express a few basic things. The key is a functionally complete set of logic gates or their equivalents: a small set of gates that can be combined to produce any desired output signal for every possible combination of input signals. For example, if a medium is capable of expressing AND ("if both inputs carry a signal, produce an output signal, otherwise produce no signal") and NOT (a single-input gate that says "produce the opposite of what the input is"), then any conceivable mathematical operation can be performed in binary.
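To make the idea of functional completeness concrete, here is a minimal Python sketch in which AND and NOT are the only primitive gates, with AND taken in its usual sense (an output only when both inputs are on). Everything else, including OR, XOR, and a small binary adder, is built by wiring those two together. The function names and the 8-bit width are illustrative assumptions, not anything from a particular machine.

```python
def AND(a: bool, b: bool) -> bool:
    """Primitive gate: signal out only when both inputs carry a signal."""
    return a and b


def NOT(a: bool) -> bool:
    """Primitive gate: produce the opposite of the input."""
    return not a


def OR(a: bool, b: bool) -> bool:
    # De Morgan's law: a OR b == NOT(NOT a AND NOT b)
    return NOT(AND(NOT(a), NOT(b)))


def XOR(a: bool, b: bool) -> bool:
    # True when exactly one input is on: (a OR b) AND NOT (a AND b)
    return AND(OR(a, b), NOT(AND(a, b)))


def full_adder(a: bool, b: bool, carry_in: bool) -> tuple[bool, bool]:
    """Add three one-bit signals; return (sum bit, carry out)."""
    partial = XOR(a, b)
    total = XOR(partial, carry_in)
    carry_out = OR(AND(a, b), AND(partial, carry_in))
    return total, carry_out


def add(x: int, y: int, width: int = 8) -> int:
    """Ripple-carry addition of two unsigned integers, one bit at a time.

    The shifts and masks below only unpack and repack the bits; all of the
    actual arithmetic goes through the gates defined above. The result
    wraps around at 2**width (an assumed, hypothetical word size).
    """
    carry = False
    result = 0
    for i in range(width):
        a = bool((x >> i) & 1)
        b = bool((y >> i) & 1)
        bit, carry = full_adder(a, b, carry)
        if bit:
            result |= 1 << i
    return result


print(add(19, 23))  # 42
```

Any medium that can implement the two primitive functions at the top, whether with transistors, gears, or channels of water, can in principle run the rest of this unchanged.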

So to make a water computer, you'd need to be able to build these two gates, presumably with drainage for overflow and an extra source of water. You'd also need either a slope so that the water flows downhill or a supply of high pressure, plus a signal repeater (i.e., a way to keep the water flowing) if you ever wanted to build a loop that went uphill. The same can obviously be said of any other liquid. (Indeed, hydraulic computers are an old running joke from a comic called the Crunchly Saga.) Realistically, these may offer some resilience gains over other types of computers, but they are most likely to be popular only amongst very primitive peoples.

Beyond. And after that, what? If photonic and quantum computers aren't enough for your setting, you're pretty much free to invent physics. Some authors like inscribing optical circuitry in crystals (popularized perhaps in Stargate SG-1), which isn't particularly infeasible but requires some clever laser work to execute; others cram machinery into hyperspace or subspace, either to exploit four-dimensional geometry or merely to keep things out of the way in the material universe. If you do go for a higher-dimensional route, make sure you're safe from local faster-than-light traffic.

Biology offers two vectors for computation that are currently being explored: DNA computing and wetware computing. Both are, unavoidably, much slower than any of the electronic computers we use today, but offer considerable perks in storage density and redundancy.

DNA computers work by treating the DNA itself as a program, where alterations in the structure such as clipping the molecule are used to process computations. Some such computers, called biomolecular computers, invoke other types of biological molecules like proteins to do some of this work, although a pure DNA computer is possible, and DNA has been proven capable of operating like a Turing machine. These kinds of computers cannot easily exist inside of an existing organism, however, because they use DNA in such a different manner from normal cells; they'd quickly be encrusted in modifier proteins or destroyed as foreign. The appeal of DNA computing is primarily in how easy it is to achieve parallelism (many options explored simultaneously) and perhaps in the density of information storage.

Wetware computers are built from actual neurons. They have long been plumbed by science fiction authors, and were first explored seriously in the late nineties. Because a neuron can treat incoming signals as either excitatory or inhibitory, networks of neurons are functionally complete. Since the result is a neural network, this analogue computer can also be tuned to handle data slightly differently over time. However, this also means that computations are rarely if ever exact, that data storage is likely to be inefficient or error-prone, and that any complex analysis is likely to take much longer than on an electronic system. Like biomolecular computers, wetware offers great potential for parallelism; unlike them, it can be grown easily and can coexist in normal organisms (barring unexpected evolution).
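The link between excitatory and inhibitory signals and functional completeness can be sketched with a heavily idealized threshold neuron: it sums its weighted inputs and fires only if the total reaches a threshold. Positive weights stand in for excitatory connections and negative weights for inhibitory ones. This is an assumption-laden toy in Python, with hypothetical names and hand-picked weights, and real neurons are far noisier and more analogue than this.

```python
def neuron(inputs: list[int], weights: list[int], threshold: int) -> int:
    """Idealized neuron: fire (1) if the weighted input sum reaches the threshold.

    Positive weights model excitatory synapses, negative weights inhibitory ones.
    """
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def and_gate(a: int, b: int) -> int:
    # Two excitatory inputs; both must be active to reach the threshold.
    return neuron([a, b], [1, 1], threshold=2)


def not_gate(a: int) -> int:
    # One inhibitory input; the neuron fires unless it is suppressed.
    return neuron([a], [-1], threshold=0)


def nand_gate(a: int, b: int) -> int:
    # Two inhibitory inputs; only both together can silence the neuron.
    return neuron([a, b], [-1, -1], threshold=-1)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, and_gate(a, b), nand_gate(a, b))
```

Since AND and NOT (or NAND alone) are enough to build any other gate, a network of such units can in principle compute anything a conventional logic circuit can, though without the exactness.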

That being said, it is not actually a bad thing that wetware computers are imprecise: research has shown that the errors our brains make are extremely important to our success as a species. This stands to be of great value to anyone who wants to describe a convincing primitive artificial intelligence. More on that in part three.

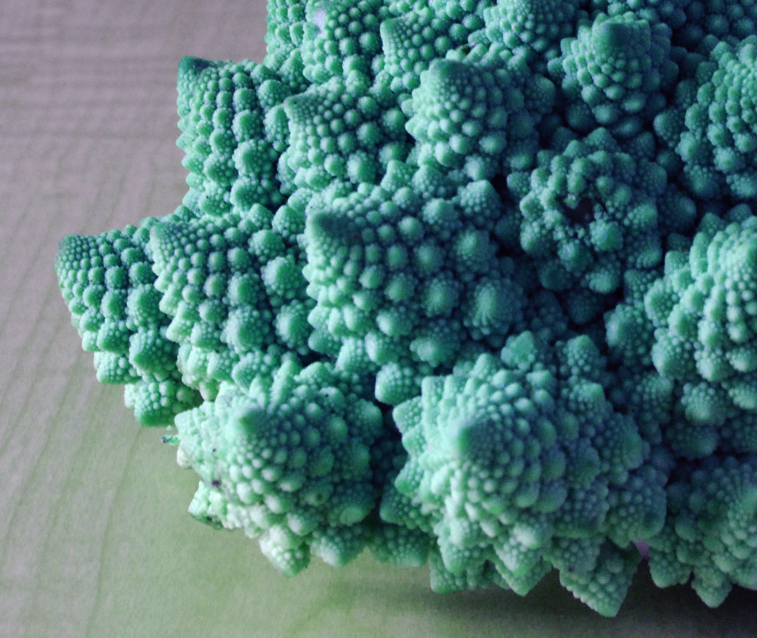

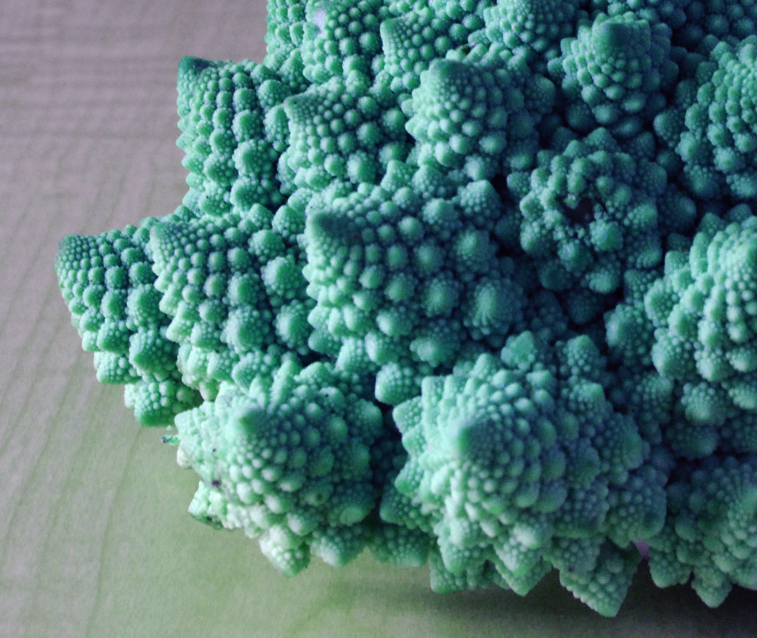

Romanesco broccoli, a naturally-occurring fractal.
